EP 1108

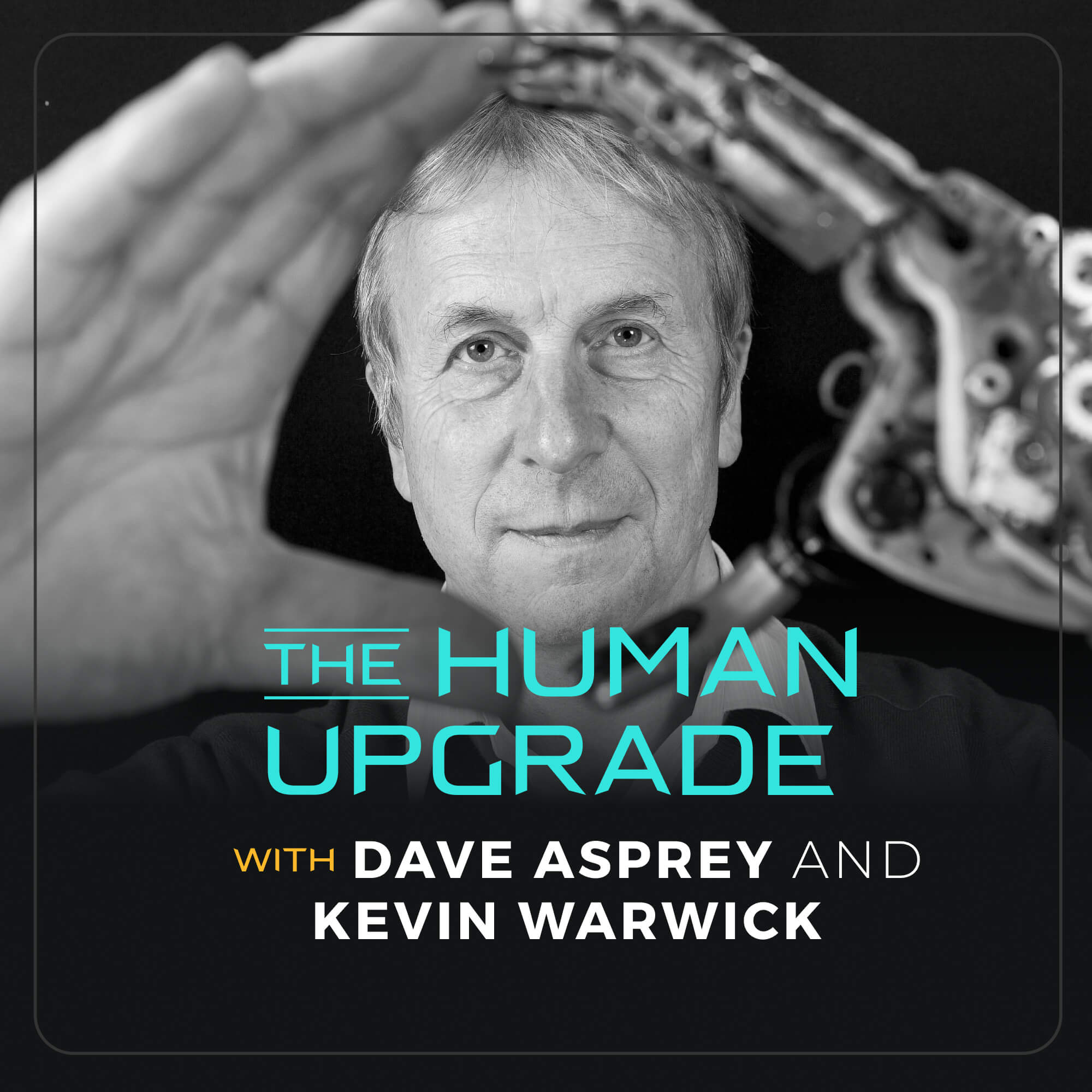

1106. Human 2.0: The Cyborg Revolution Is Here

We’re delving into the world of cybernetics and the potential cyberpunk future with a true pioneer and a living legend in the field, Kevin Warwick. We explore Kevin’s history of pushing the boundaries of human potential by integrating technology with biology, and what the future holds.

Subscribe To The Human Upgrade

In this Episode of The Human Upgrade™...

Today, we delve deep into the world of cybernetics and the potential cyberpunk future with a true pioneer and a living legend in the field, Kevin Warwick. As an emeritus professor at Coventry and Reading Universities, Kevin is celebrated as the world’s first cyborg, earning him the moniker “Captain Cyborg.”

In this episode, we explore the incredible journey of Kevin Warwick, who became a cyborg over 25 years ago by implanting a coin-sized chip in his arm, enabling him to interact with the digital world in ways that were once unimaginable. But this isn’t just about becoming a cyborg; it’s about the potential, the risks, and the opportunities at the intersection of AI, robotics, and biomedical engineering. We discuss the fascinating implications of merging humans with technology and how it can reshape our understanding of reality.

Kevin’s fearless exploration into the world of cybernetics is truly pioneering. He has been willing to take risks to expand our knowledge and possibilities as humans. Join us as we explore the profound implications of merging biology with technology, opening up new realms of human potential and perception, and what our future could hold as technology and research continue to progress.

“The scientist I am, and was, is somebody who tries to push the boundaries a little bit.”

Kevin Warwick

(00:01:43)

The Realities of Pioneering Human Enhancement

- The paradoxes involved in the evolution of humanity through innovative research

- The ethics of building technology that you know could be used for good or bad

- Is Kevin still wearing the implant?

- The lack of accountability for those who do bad things with technology in government

(00:10:05)

Kevin’s Implantation Experiment & Research

- What happened when he connected his nervous system to the internet

- Using BrainGate electrodes

- The lack of progression in neuroscience since his experiment

- What it felt like to inject a current into his nervous system

(00:18:10)

Expanding Our Biological “Software”

- Bruce Sterling cyberpunk books

- Musing on potential neurological upgrades like a digital compass or tongue printer

- Experimenting with an infrared brain stimulator

(00:24:39)

How Far Is Too Far? Risk Tolerance for the Sake of Science

- Hacking the communications network between cells and voltage-gated calcium channels

- Concerns with EMFs and implants

- Taking risks in order to further science

- Considering the risks of not progressing the science

(00:32:47)

Exploring Human Enhancement Possibilities

- Human enhancement using electricity

- P300D, the EEG measure: a lag time on reality

- Possibilities and potential risks for enhancing human communication

(00:39:21)

Kevin’s Take on Longevity From His Brain Cell Research

- The key to longevity according to Kevin

- Experimenting with brain cells to influence longevity

- The heart-body-mind-gut connection in the brain

(00:43:13)

Biohacking As An Entry Point Into Cyborgs

- TED Talk: What Is It Like To Be A Cyborg? by Kevin Warwick

- Kevin’s opinion on the Grinder movement

- Dave’s take on implants and safeguards needed for that technology

(00:52:41)

The Future of Cyborgs, Humans & Artificial Intelligence

- How far will AI go in the future?

- Possible dangers in the field of AI

- Cyborg possibilities in 25 years

- Meeting Jeff Bezos at the World Economic Forum

- Augmented filtering with AI and creating antivirus software for our minds

- Metaphysical applications for exploring the universe

- Opportunities for creating brain-computer interfaces

Enjoy the show!

LISTEN:

“Follow” or “subscribe” to The Human Upgrade™ with Dave Asprey on your favorite podcast platform.

REVIEW:

Go to Apple Podcasts at daveasprey.com/apple and leave a (hopefully) 5-star rating and a creative review.

FEEDBACK:

Got a comment, idea or question for the podcast? Submit via this form!

SOCIAL:

Follow @thehumanupgradepodcast on Instagram and Facebook.

JOIN:

Learn directly from Dave Asprey alongside others in a membership group: ourupgradecollective.com.


BOOKS:

4X New York Times best-selling science author

Smarter Not Harder: The Biohacker’s Guide to Getting the Body and Mind You Want is about helping you to become the best version of yourself by embracing laziness while increasing your energy and optimizing your biology.